HIGHLIGHT: Is Emerging Tech “Too Innovative” to Regulate? Lessons from the Titan Tragedy

A cautionary industry tale about the risks of bypassing safety and ethical standards in emerging technologies

This article references the Titan submersible tragedy as a framework for exploring safety and responsibility in technology development. We share this comparison with deep respect and continued sorrow for the lives lost and the families still grieving. Our intent is to reflect on what can be learned — never to diminish the gravity of that event.

In the summer of 2023, the world watched as the Titan submersible went missing during a dive to explore the Titanic wreck.

The outcome was tragic: the vessel had catastrophically imploded, and all five passengers aboard were lost.

As investigations unfolded, it became clear that the Titan had not undergone voluntary safety certification — a process widely accepted as best practice in the deep-sea exploration sector.

The company behind the Titan, OceanGate, had opted out of industry-recognized safety certification, citing concerns that the process might slow down innovation.

That opt-out decision prompts much reflection today. It is nearly impossible not to wonder what might have happened had OceanGate made different safety decisions.

A shared dilemma

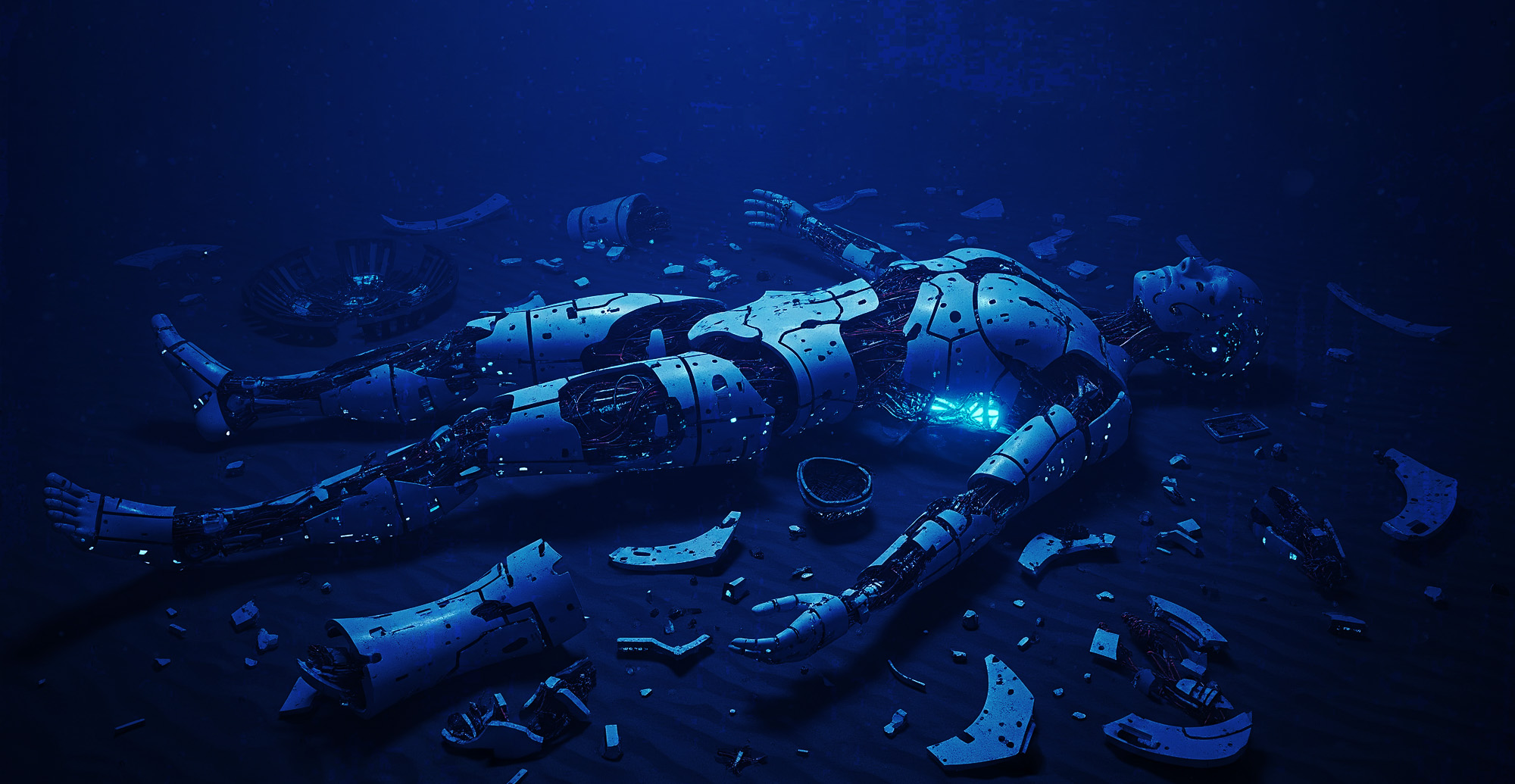

Here at The CyberPsych Institute (CPI), we also see disconcerting and striking parallels in emergent tech development, particularly in the growing attitudes toward heeding (or not heeding) industry safety protocols and people-first standards.

The Titan tragedy offers an important chance for us to step back and ask:

- How can we support innovation while safeguarding the people it touches?

- And how do we ensure responsibility scales with ambition?

While the fields of deep-sea exploration and AI development may seem worlds apart, they share this common challenge:

How do we ensure safety and accountability when innovation is moving faster than oversight?

Additionally, OceanGate’s safety-averse mindset and leadership reveal some parallels with the emergent technology industry, such as:

- Pro-innovation centeredness

AI companies are building bold, novel technologies capable of massive disruption and socio-economic impact. OceanGate CEO Stockton Rush likewise believed his bold, novel approach to Titan’s construction was genius and would revolutionize underwater travel.

- Lofty visions and “best intentions”

AI companies aspire to be the first to build Artificial General Intelligence (AGI). Likewise, Rush wasn’t just attempting to transform marine exploration; he believed that Titan’s successful underwater expeditions would prove that the deep ocean, and NOT space, would be humankind’s next frontier.

- Ethical and human-first standards aren’t always deemed important or necessary

In emergent tech circles, there’s a growing climate that regards industry standards as bypassable and frames discussions of regulation or governance as limiting or “in the way of innovation.” Rush largely echoed these sentiments about regulation in his own industry.

With regard to the latter point, Rush was widely quoted disparaging safety standards in underwater transportation.

In 2019, for example, he explained his annoyance with submersible regulations to Smithsonian Magazine:

“It’s obscenely safe because they have all these regulations. But it also hasn’t innovated or grown — because they have all these regulations.”

Stockton Rush, OceanGate CEO (2019)

Rush made that statement when asked about the Passenger Vessel Safety Act (PVSA) of 1993 (H.R. 1159), which imposed rigorous new manufacturing and inspection requirements and prohibited dives below 150 feet.

Three years later, Rush expressed clear disdain for industry safety:

“At some point, safety just is pure waste. I mean, if you just want to be safe, don’t get out of bed, don’t get in your car, don’t do anything. At some point, you’re going to take some risk, and it really is a risk/reward question.”

Stockton Rush, OceanGate CEO (2022)

Unfortunately for OceanGate, Rush’s pro-innovation, risk/reward-only mindset shaped key operational decisions and tainted views on safety over the years, with profound downstream effects on public trust and human safety.

Though what happened with Titan is not a one-to-one parallel with AI development, OceanGate’s mishandling of safety and people-first practices does offer us clear and cautionary considerations.

Similarities worth reflecting on

The Titan tragedy surfaced a few themes that also appear in fast-evolving sectors like AI.

These themes include:

- Voluntary standards and opt-outs

Most of the deep-sea industry follows third-party certification, even though it’s not legally required. OceanGate didn’t. Similarly, some AI companies operate with only internal ethics guidelines, without formal oversight or external review. Others develop without any internal ethics guidelines at all.

- Innovation vs. regulation tension

OceanGate’s CEO believed certification would hinder innovation. In AI, some argue that regulation could slow progress or stifle global competitiveness. But thoughtful safety frameworks don’t have to halt momentum; they can enhance trust and stability.

- Ignored or minimized warnings

Prior to the Titan’s dive, experts raised design and safety concerns. In AI, ethicists, researchers, and even former employees have voiced unease about opaque systems, rapid deployment, and high-stakes applications. Those concerns are often dismissed or brushed off as alarmist.

- Trust without transparency

Passengers aboard Titan trusted the system. Many AI users want to trust AI systems. Some trust more than others, but not everyone trusts AI, and some further mistrust the companies that make it. That mistrust worsens without full visibility into how these systems work, how they’re tested, or how they affect lives at scale.

None of this is intended to cast blame. Instead, it is about recognizing important patterns that can help us build better, safer, and more human-centered technologies going forward.

Suggestions for responsible progress

At CPI, we believe in innovation with integrity. Drawing from the considerable lessons of Titan, here are several guiding ideas for how developers, leaders, and communities can move forward together:

- Design transparency into development

Share not just what a system does, but how it was built and tested, especially when it impacts people’s rights, choices, or opportunities.

- Make safety review a cultural norm, not a constraint

In high-stakes technologies, third-party review and independent testing should be viewed as value-adds, not hurdles. When trust is on the line, transparency strengthens markets.

- Encourage diverse voices and early feedback

Whistleblowers, ethicists, users, and domain experts all provide critical insight, not to complain or impede progress but to improve holistic outcomes. Their feedback is especially valuable for identifying blind spots that internal teams may have overlooked.

- Balance speed with societal readiness

Just because a technology can be deployed doesn’t always mean it should be. Take time to understand real-world impact, especially when systems and their intended populations come together; their interconnectedness may produce unforeseen reactions or impact (as we recently saw when OpenAI launched GPT-5 to unexpected user backlash).

- Support policy that invites shared responsibility

Thoughtful policy doesn’t mean stopping innovation. It means building collective guardrails so that risk and responsibility are distributed, not concentrated in just a few hands.

A role for all of us

Whether you’re a developer, policymaker, educator, entrepreneur, or just someone curious about how AI will shape the future, you absolutely have a role to play.

Responsible, ethical, and people-first technology isn’t just “a technical task”; it’s a humane decision.

The lessons from Titan ask us to consider this:

What do we owe each other when we design systems that reach beyond ourselves?

The answer: we owe transparency, care for human life, and accountability.

These values don’t “slow innovation”; they make innovation last beyond its novelty.

(We recognize drawing comparisons to the Titan tragedy may be uncomfortable for some readers, especially given the profound loss of life. Our hearts remain with the families and loved ones affected by this heartbreaking event. This article is offered in the spirit of reflection and learning, not to sensationalize or diminish the human cost of that tragedy.)

Mayra Ruiz-McPherson, PhD(c), MA, MFA

Executive Director & Founder

The CyberPsych Institute (CPI)

Empowering Minds for the AI Age